There is no shortage of AI guidance right now. Checklists, principles, policy statements, usage rules – they are being produced faster than most organisations can read them, let alone implement them. So why add another framework to the pile?

Because most of what’s out there has a problem: it’s already going out of date.

Guidance written around specific tools becomes redundant when those tools change. Rules built on the current regulatory landscape need rewriting when the landscape shifts. And long, complex frameworks that require training programmes and governance committees to implement don’t help the person sitting at their desk trying to work out whether it’s appropriate to use AI for the task in front of them right now.

ICE/BERG was built to be different on all three counts. Here’s what that means in practice.

Why most AI frameworks date quickly

Cast your mind back to early guidance on generative AI – even guidance from 18 months ago. Much of it was written around specific versions of specific tools, referenced regulatory positions that have since moved, or framed AI as something novel and unfamiliar that needed to be approached with particular caution.

The tools have moved on. The regulation has moved on. And in many organisations, so have staff attitudes – AI is no longer exotic; it’s routine.

Frameworks that were built around a particular moment in AI development tend to feel dated or irrelevant once that moment has passed. At worst, they create a false sense of security: the organisation has “done” AI guidance, without anyone checking whether that guidance still reflects the reality of how people are actually working.

What makes ICE/BERG different

ICE/BERG is not built around any particular tool, model, or platform. It doesn’t reference ChatGPT or Copilot or Gemini by name. It doesn’t depend on the current state of the EU AI Act or the ICO’s most recent guidance.

Instead, it’s built around something that doesn’t change: human behaviour and judgement.

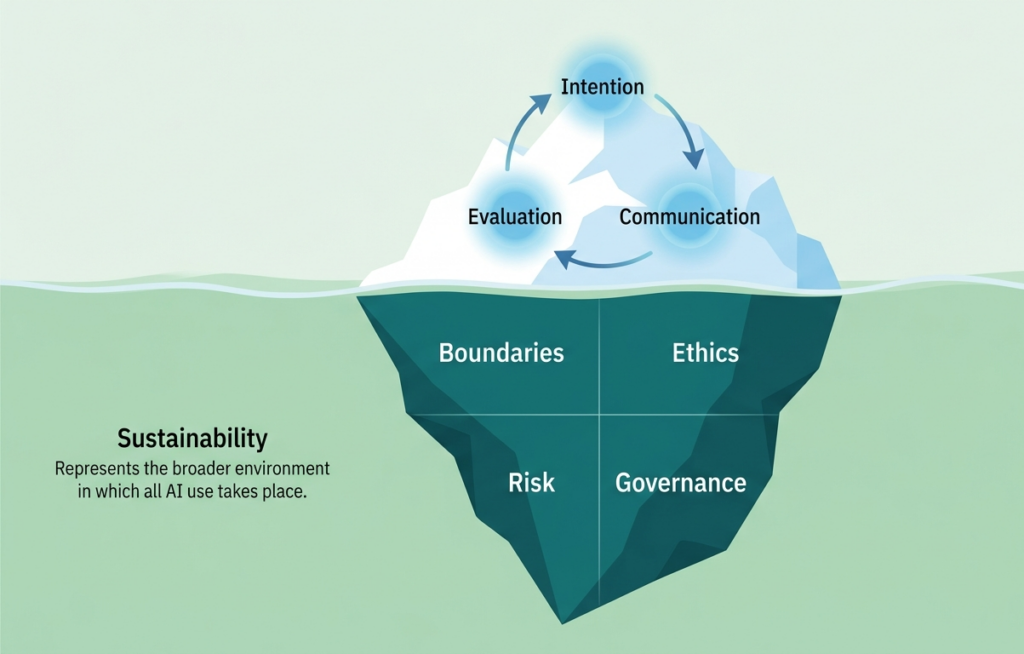

The three steps of the ICE cycle – Intention, Communication, Evaluation – are grounded in how good professional practice works, regardless of what technology is involved. Being clear about what you’re trying to achieve before you start. Communicating clearly and deliberately. Checking your outputs before you use them. These are not AI-specific behaviours. They’re the behaviours that distinguish thoughtful practitioners from careless ones, and they apply whether you’re using a large language model in 2026 or whatever comes after it.

The BERG – Boundaries, Ethics, Risk, Governance – is similarly durable. The questions it asks are not regulatory questions, though they connect to regulation. They’re questions of professional accountability: what are you permitted to do, who might be affected, what could go wrong, and who is responsible for the output? Those questions don’t expire.

And the sea of Sustainability surrounds all of it – because the environmental and capability implications of AI use aren’t going away, and any framework that ignores them is only telling part of the story.

Built for real people, not just policy documents

The other deliberate design choice behind ICE/BERG is that it can be used without a training programme.

The iceberg metaphor is not decorative. It’s a memory device. Once someone understands the structure – the cycle above the waterline, the foundations below, the sea surrounding it – they have a mental model they can reach for in any AI interaction, without needing to consult a document.

That matters particularly in higher and further education, where the people using AI tools include administrative staff, academics, finance teams, registry, student services – a huge range of roles with very different levels of familiarity with data protection, ethics, and governance. ICE/BERG gives all of them the same starting point without requiring them all to become compliance specialists.

How to start using it

You don’t need to implement ICE/BERG as a programme or build a training course around it. The simplest starting point is to apply it to your next AI interaction.

Before you start: what’s your Intention? What does a genuinely good outcome look like here?

As you work: are you Communicating clearly and deliberately, or just throwing text at the tool and seeing what comes back?

Before you use the output: have you Evaluated it properly? Not just read it, but genuinely checked it against your own professional knowledge?

And underneath all of that: are you clear on your Boundaries? Have you considered the Ethics? Do you understand the Risk? And do you know what Governance – disclosure, accountability, quality assurance – applies?

If you can answer those questions honestly, you’re already using AI more responsibly than most.

What’s next for ICE/BERG

The framework is live now at thedatagoddess.com/iceberg_framework. I presented it for the first time at SROC in April 2026, and the response confirmed what I’d hoped: it resonates because it’s practical, it’s memorable, and it meets people where they actually are.

I’ll be developing resources to support the framework over the coming months – including worked examples, self-assessment tools, and materials for organisations that want to embed it in their AI governance approach. If you’d like to be kept informed as those develop, sign up to the newsletter or get in touch directly.

And if your organisation is already thinking about how to support staff to use AI well – not just what they’re permitted to do, but how to do it thoughtfully – I’d be glad to talk.