ICE/BERG: An AI Literacy Framework for Sustainable Practice

Artificial intelligence is changing how we work in education. But most people using AI tools have never been given a framework for doing it well. ICE/BERG is designed to change that.

Developed by The Data Goddess, ICE/BERG is a practical AI literacy framework for people working in higher and further education. It gives you a structure for every AI interaction, a foundation of deeper considerations to underpin your practice, and a reminder that sustainable use matters for all of us.

How the framework works

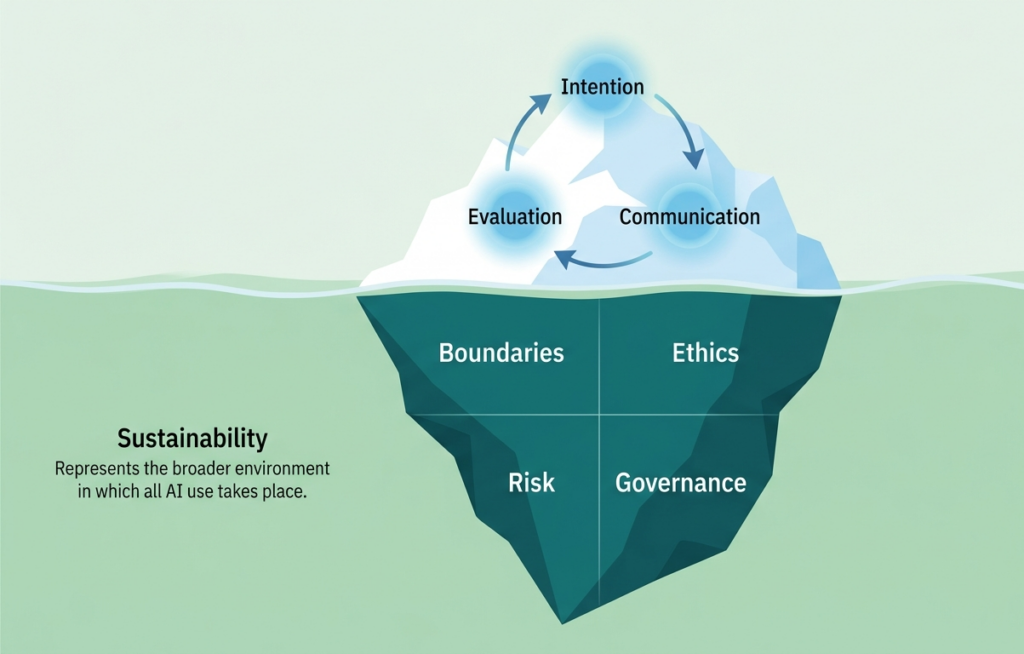

The ICE/BERG framework takes its name from the iceberg metaphor at its heart. The part most people see and focus on is the cycle above the waterline. But it is the larger mass beneath the surface, and the sea surrounding it, that determine whether AI use goes well or badly.

The ICE Cycle: what you do every time

ICE is the repeatable cycle at the centre of every AI interaction. Whether you are using AI for the first time or the hundredth, these three steps keep your work purposeful and effective.

Intention

Know what you want before you start. What does a good outcome look like? Being clear about your purpose shapes everything that follows, and stops you reaching for AI out of habit rather than genuine need.

Communication

Direct the AI clearly and deliberately. The quality of what you put in shapes the quality of what you get out. This is a skill that develops with practice, so treat every interaction as a chance to improve.

Evaluation

Do not just accept the output. Check it, question it, and apply your professional judgement before you use it. You are responsible for what you produce, whether AI was involved or not.

The BERG: the foundations beneath every interaction

Most people underestimate how much of the iceberg lies below the surface. These four considerations sit beneath every ICE interaction and shape how well it goes. Ignoring them is how things go wrong.

Boundaries

Know what you can and cannot use AI for in your role and institution. Understand what information should never go into a prompt, and make sure you are working within your organisation’s policies.

Ethics

Consider fairness, transparency and bias. Think about the people affected by AI-assisted work and decisions, and whether the way you are using AI is something you could explain and stand behind.

Risk

Understand the limitations of AI tools. Know how much you can rely on outputs for a given purpose, and factor in what could go wrong before you choose your approach.

Governance

Be accountable for what you produce. Know what disclosure is needed, who owns the output, and how quality is assured before anything goes further.

The Sea: sustainability as the surrounding environment

The iceberg does not exist in isolation. Sustainability is the sea it sits within, and it shapes the conditions for all AI use. It asks a single overarching question: is what you are doing sustainable for you, your organisation, and the planet?

That means considering the environmental cost of AI tools, many of which have a significant and largely invisible carbon footprint. It also means thinking about whether your AI practice is building genuine capability and confidence over time, or quietly creating dependency. Sustainable AI literacy is about developing your own skills and judgement alongside the tools you use.

Who is this for?

ICE/BERG was developed with professional services teams in higher and further education in mind, but the principles apply wherever people are using AI at work. Whether you are just getting started or looking to build on existing practice, the framework gives you a common language and a shared structure to work from.

Bring ICE/BERG to your organisation

ICE/BERG is available as a workshop, webinar, or short course for teams and institutions. If you would like to explore how it could support your staff development programme, get in touch.